Welcome to the first post of our artificial intelligence series. In this post, we will cover the fundamentals of basic neural networks and see how biological neurons inspired them.

Introduction

As artificial intelligence becomes increasingly commonplace, it’s important to understand the basics of how these systems work. In this post, we’ll take a look at artificial neural networks, which are a type of AI that is modeled after the brain. We’ll see how they work and discuss some of their potential applications.

Artificial Neural Network

An artificial neural network (ANN) is a computational model inspired by the way biological nervous systems, such as the brain, process information. Its key element is the artificial neuron, a simplified mathematical imitation of the structure and function of its biological counterpart.

ANNs are used to model complex patterns in data, such as handwritten characters or images. In recent years, they have been successfully applied to a variety of tasks, including speech recognition, image classification, and machine translation.

How Biological Neurons Work

Let us first examine a simplified version of a biological neuron. Scientists learned a lot about how a single neuron works by studying the giant axon of the squid. This nerve fiber can be nearly 1 mm wide, which makes it easily visible to the naked eye and large enough to probe with electrodes. Axons can also be very long: some in the human body run for about a meter.

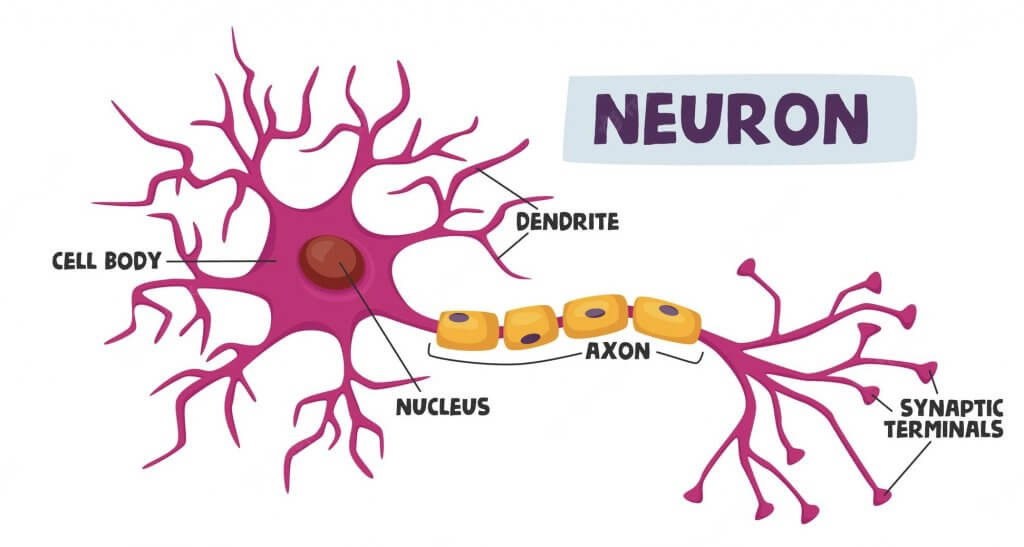

Here is a simplified view of a biological neuron:

Our brain is made up of billions of interconnected neurons that exchange information with one another. Each neuron receives input from several other neurons and produces an output that is passed on to other neurons in the network.

Simply put, a neuron that receives signals behaves like an electrical conductor. The neuron has a cell body that contains the nucleus as well as other organelles. The cell body also has dendrites, which act like branching wires that receive incoming electrical impulses from other neurons. These impulses travel down the dendrites to the cell body, where they are either passed on or not.

If the combined signal pushes the neuron’s membrane potential past a threshold (around -55 mV), it triggers an action potential. An action potential is an electrical pulse that travels down the length of the neuron’s axon. The axon is a long, thin fiber that transmits electrical impulses away from the cell body to other neurons or muscles. So a single neuron receives multiple input signals through its dendrites, its cell body “sums” all these signals, and it then checks whether the total passes a certain threshold. If it does, the neuron “fires” and passes the signal down its axon to other connected neurons.

How Artificial Neurons Work

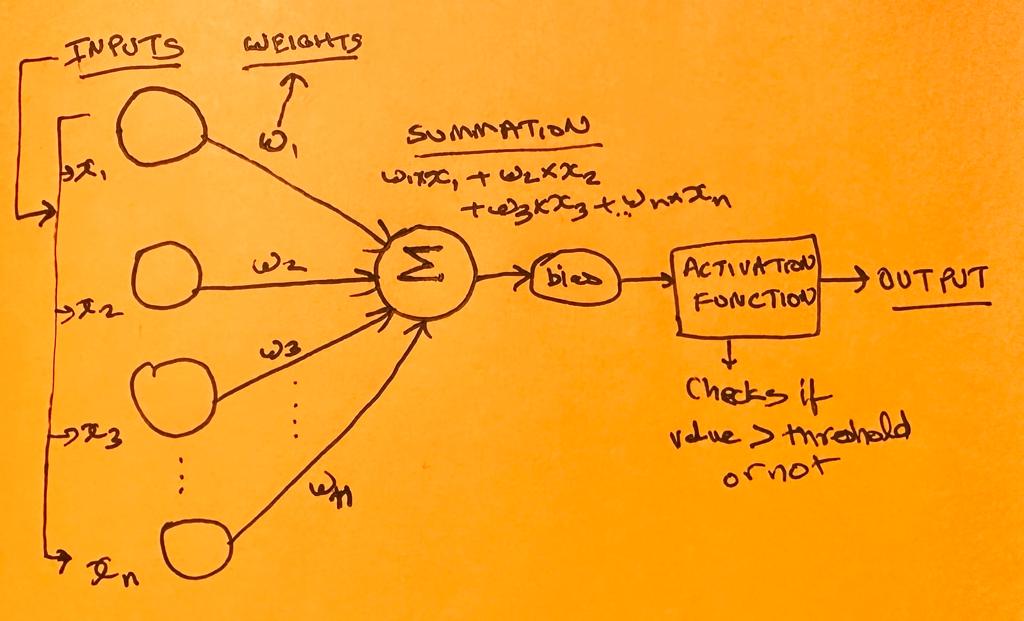

An artificial neuron is a mathematical model of a biological neuron. Just like its biological counterpart, the artificial neuron sums the input signals and produces an output signal if the sum is greater than a threshold value. Otherwise, no output signal is produced.
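The sum-and-threshold behavior described above can be sketched in a few lines of Python. This is a minimal illustration, not a production model: the function name `threshold_neuron` and the sample values are assumptions made for this example, and weights are left out on purpose.

```python
def threshold_neuron(inputs, threshold):
    """Return 1 (the neuron fires) if the summed inputs exceed the threshold, else 0."""
    total = sum(inputs)
    return 1 if total > threshold else 0


# Hypothetical input signals chosen for illustration.
strong_signals = [0.2, 0.5, 0.4]  # sums to about 1.1
weak_signals = [0.2, 0.5, 0.1]    # sums to about 0.8

print(threshold_neuron(strong_signals, threshold=1.0))  # fires
print(threshold_neuron(weak_signals, threshold=1.0))    # does not fire
```

The output is all-or-nothing here, which mirrors the way a biological neuron either fires or stays silent.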

Each connection into an artificial neuron carries a weight, which determines how strongly that input affects the neuron’s output. In other words, a weight represents the strength of an input signal. Weights can be positive or negative: a positive weight represents an excitatory input, and a negative weight represents an inhibitory input. The weights are learned by adjusting them until the neural network makes accurate predictions on a training set of data. After the weights have been learned, the network is run on a separate test set of data to evaluate its performance.
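To make "learning by adjusting weights" concrete, here is a small sketch that trains a single neuron to reproduce the logical AND of two inputs. It uses the classic perceptron learning rule, which is only one simple way to adjust weights and is chosen here purely for illustration. The names `predict`, `train`, and `AND_SAMPLES`, as well as the learning rate and epoch count, are assumptions made for this example.

```python
def predict(weights, bias, inputs):
    """Fire (1) if the weighted sum plus bias is positive, else stay silent (0)."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train(samples, learning_rate=0.1, epochs=50):
    """Nudge the weights after every wrong prediction (perceptron learning rule)."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)  # -1, 0, or +1
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Training set: every input pair for logical AND with its expected output.
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train(AND_SAMPLES)
for inputs, target in AND_SAMPLES:
    print(inputs, "->", predict(weights, bias, inputs), "expected", target)
```

Because AND is such a tiny problem, this sketch reuses the training samples to check the result. A real project would hold out a separate test set, as described above.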

When a neural network is presented with an input, each neuron calculates its output from the inputs it receives and the weights on its connections: it sums the weighted inputs and passes the result through an activation function. The outputs of one layer of neurons become the inputs to the next layer, and the outputs of the last layer form the final output of the network.
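As a rough sketch of how an input flows through a network, the example below passes two input values through a small group of neurons and then feeds their outputs into one final neuron. Everything specific here is an assumption made for illustration: the sigmoid activation, the hand-picked weights and biases, and the names `layer_output` and `forward`. A trained network would learn its weights rather than have them written by hand.

```python
import math


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron_output(inputs, weights, bias):
    """Weighted sum of the inputs plus bias, passed through the activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)


def layer_output(inputs, layer):
    """A layer is a list of (weights, bias) pairs, one pair per neuron."""
    return [neuron_output(inputs, weights, bias) for weights, bias in layer]


# Hypothetical, hand-picked parameters: two neurons feeding one output neuron.
hidden_layer = [([0.5, -0.6], 0.1), ([-0.3, 0.8], 0.0)]
output_layer = [([1.2, -0.7], -0.2)]


def forward(inputs):
    """Feed the inputs through the first group of neurons, then the output neuron."""
    hidden = layer_output(inputs, hidden_layer)
    return layer_output(hidden, output_layer)


print(forward([1.0, 0.5]))
```

The result is a list with one number between 0 and 1, which could be read as a score or a probability depending on the task.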

Here are the simplified steps:

1. Set Input: Each input is a feature of the dataset. Let’s say we are trying to build a neural network to identify pictures. Then the RGB value of each pixel of the photo to be checked can be an input.

2. Initialize Weights: The weights for each of the input nodes in ANNs are initialized randomly at the beginning. Remember, each node is like a neuron, and the weight can be treated as the strength of each input signal.

3. Summation: Multiply each input node value by its weight. Then sum up all these values.

4. Bias: We need to add a bias to this sum (we will cover bias in more detail later). The bias is added to the weighted sum before it is passed to the activation function. Each node has its own bias value. Like weights, bias values can be initialized randomly, but they are usually set to 0 for all nodes at the start.

5. Activation Function: The weighted sum with the bias added is passed to an activation function. This function acts like a threshold and determines the neuron’s output. The output could be zero (which means the neuron did not fire after receiving all the input signals of a particular strength), a small value, or a large value.

This output can be used to make predictions or decisions based on the input data.
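The steps above can be sketched for a single neuron as follows. This is an illustrative sketch under stated assumptions: the pixel value is made up, the inputs are scaled to the range 0 to 1, weights are drawn uniformly from -1 to 1, and ReLU is picked as the activation function because it outputs zero when the neuron does not fire and a positive value otherwise. The names `init_neuron` and `neuron` are hypothetical.

```python
import random


def relu(z):
    """Activation: 0 if the neuron does not fire, otherwise the value itself."""
    return max(0.0, z)


def init_neuron(n_inputs, rng):
    """Initialize weights randomly and set the bias to 0."""
    weights = [rng.uniform(-1.0, 1.0) for _ in range(n_inputs)]
    bias = 0.0
    return weights, bias


def neuron(inputs, weights, bias, activation=relu):
    """Summation, bias, and activation for one neuron."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs))  # summation
    return activation(weighted_sum + bias)                      # bias, then activation


# Set input: one hypothetical RGB pixel, scaled from 0..255 to 0..1.
pixel = [255, 128, 0]
inputs = [channel / 255 for channel in pixel]

rng = random.Random(42)  # fixed seed so repeated runs give the same weights
weights, bias = init_neuron(len(inputs), rng)

print("weights:", weights)
print("output:", neuron(inputs, weights, bias))
```

With random weights the output carries no meaning yet. It only becomes useful once the weights and bias have been adjusted through training.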

Applications of Neural Networks

Neural networks can be used for a variety of tasks, including pattern recognition, classification, and prediction. They are often used in image recognition applications such as facial recognition and object detection. They can also be used for handwriting recognition and text-to-speech synthesis. Neural networks have also been used to create chatbots and artificial intelligence assistants such as Microsoft’s Cortana and Amazon’s Alexa.

Conclusion

Artificial neural networks are powerful tools that can be used for a variety of tasks. In this post, we’ve seen how they work at a very simple level. Stay tuned for more posts in our artificial intelligence series where we’ll explore further details.